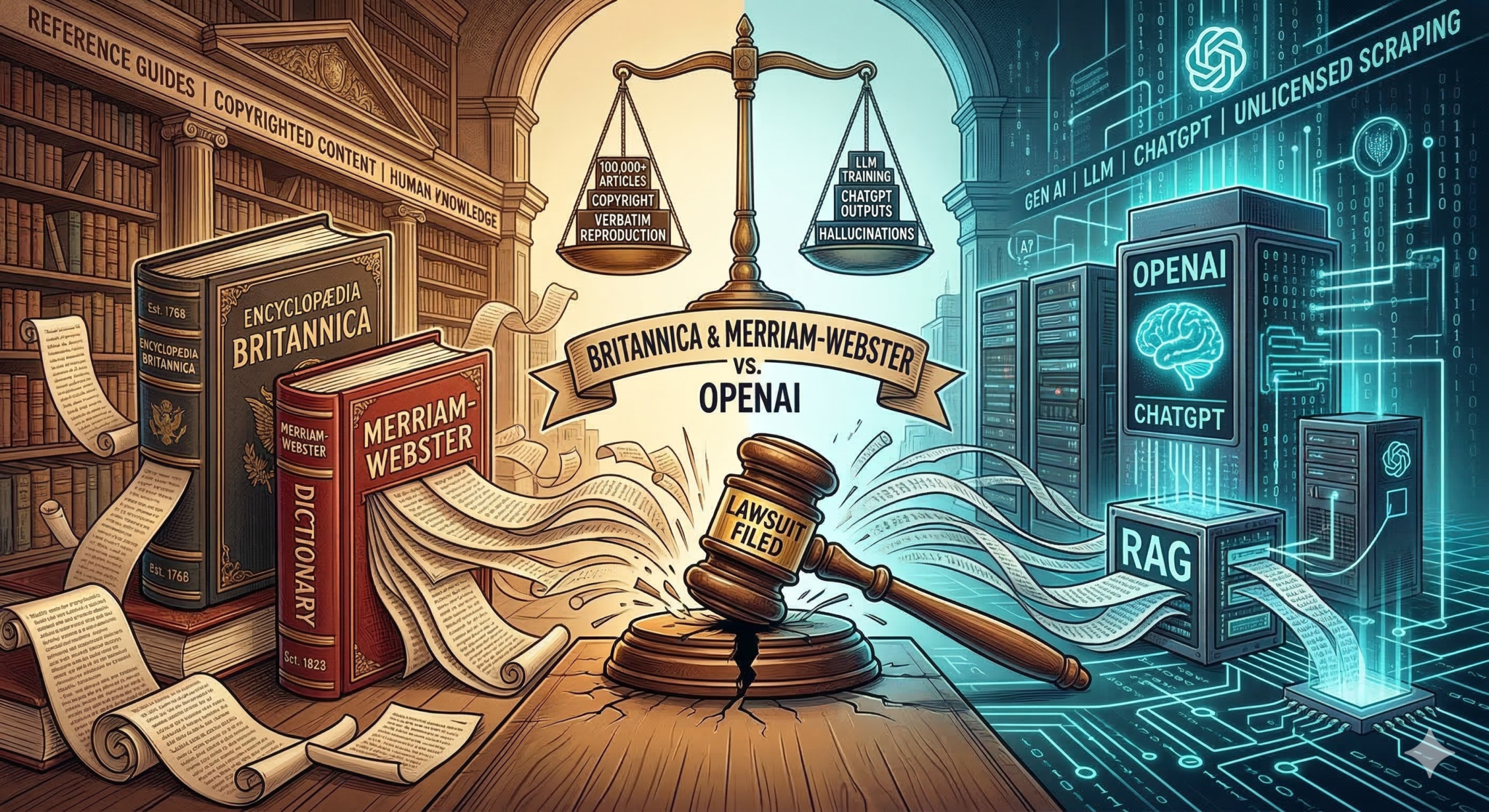

When was the last time you typed ‘Merriam-Webster.com’ into your browser to look up a word? Many people now simply ask an AI chatbot for the definition. It’s fast and efficient, but according to a new lawsuit from Encyclopedia Britannica and Merriam-Webster, this shift in habit is built on massive copyright infringement.

The publishers have taken OpenAI to court, alleging that the company scraped nearly 100,000 of their articles to train its models and now produces verbatim copies for users.

If we look past the legal filings, this situation shows a practical issue with how the internet operates today. It is a debate over how reference material is funded and distributed.

What This Means for the Future of Dictionaries

To understand Britannica’s position, imagine you have invested a fortune launching satellites to build a highly accurate weather application. You let people check the forecast for free in your app, supported by banner adverts.

Then, a separate hardware company releases a popular new smart speaker. Instead of paying for a commercial API key to access your data legally, the speaker’s software simply scrapes your entire website every time a user asks, “What’s the weather like today?” and reads your exact data aloud. The user never opens your app, meaning you earn zero ad revenue to maintain your satellites.

Occasionally, the speaker glitches. Pulling from your archived records instead of your live feed, it warns the user about an active hurricane that actually ended three years ago, and it wrongly cites your weather app as the source of that warning.

This is exactly what Britannica and Merriam-Webster are fighting against. The lawsuit argues that OpenAI is cutting into the publishers’ revenue by offering a direct substitute for their content. Furthermore, they accuse OpenAI of violating trademark law (specifically, the Lanham Act) by generating false information (hallucinations) and incorrectly attributing those errors to Britannica.

In the world of reference publishing, where reputation is the only currency that matters, this kind of false attribution is a serious threat to any brand’s integrity.

The Use of RAG: Why OpenAI Might Lose on This One

A key technical focus of this lawsuit is OpenAI’s use of RAG, or Retrieval-Augmented Generation. This is the process where an AI system retrieves relevant passages from the live web or external databases and feeds them to the model, so that the response is built on up-to-date information.

When an AI learns the structure of the English language by processing a dictionary, that is one type of usage. However, when an AI uses RAG to actively pull Merriam-Webster’s proprietary definition to answer a user’s prompt, it functions more like a search engine that bypasses the source entirely. This means the publisher receives no website traffic, resulting in zero ad revenue and zero subscription conversions to fund their editorial teams.
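To make the distinction concrete, here is a minimal sketch of the retrieve-then-generate pattern. It is a toy illustration under stated assumptions, not a description of how OpenAI’s systems actually work: the `Document` class, the `CORPUS` entries, the publisher names, and the word-overlap scoring are all hypothetical, and the final model call is left out entirely.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    source: str  # hypothetical publisher name, for attribution
    text: str    # placeholder passage, not real publisher content


# Hypothetical stand-in for an external index or live web search.
CORPUS = [
    Document("Example Dictionary", "Serendipity: finding valuable things by chance."),
    Document("Example Encyclopedia", "A hurricane is a tropical cyclone with strong winds."),
    Document("Example Almanac", "Satellites help forecasters track weather systems."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, corpus: list[Document]) -> Document:
    """Retrieval step: pick the document sharing the most words with the query."""
    query_words = tokenize(query)
    return max(corpus, key=lambda doc: len(query_words & tokenize(doc.text)))


def build_prompt(query: str, doc: Document) -> str:
    """Augmentation step: place the retrieved passage in the model's prompt."""
    return (
        f"Context (from {doc.source}): {doc.text}\n"
        f"Question: {query}\n"
        "Answer using only the context and cite the source."
    )


query = "What is a hurricane?"
best = retrieve(query, CORPUS)
prompt = build_prompt(query, best)
print(prompt)  # a real system would now send this prompt to a language model
```

Notice that the retrieved passage travels into the prompt word for word, and the user sees only the chatbot’s answer. Nothing in this flow sends the reader to the publisher’s site, which is the heart of the substitution argument.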

OpenAI is already dealing with similar lawsuits from The New York Times and other publishers. For context on how these cases can unfold, we can look at a recent ruling involving another AI company.

As detailed in the legal filings, Anthropic faced a class-action lawsuit from authors. Federal judge William Alsup determined that using books to train an AI model is transformative enough to be considered fair use. However, because Anthropic had relied on pirated copies rather than paying for the books, the company ended up agreeing to a $1.5 billion settlement.

If the courts decide that OpenAI’s scraping of Britannica’s proprietary content crosses the line from transformative learning into unlicensed copying, the financial penalties could be substantial.

The Future of Web Publishing

The publishers have a valid point in forcing this issue. We are seeing a structural change in the traditional search-and-click web model.

If AI models can provide exact answers directly within a chat interface, the traditional publisher model becomes financially unviable. Without website traffic, publishers cannot afford to employ the researchers and writers who verify the information. Ultimately, if the original content creation stops, the AI models will have fewer reliable sources to train on in the future.

However, there is a path toward a win-win scenario. If AI companies like OpenAI pay to license these resources and properly acknowledge their sources, human researchers can continue the work that AI depends on to perform and improve. A sustainable licensing framework would let both the creators and the platforms thrive.

This lawsuit is fundamentally about establishing a working business model for factual information on an AI-driven internet. We will likely see more of these disputes until tech companies and publishers can agree on a system that values the humans behind the data.